April 17, 2026

Artificial intelligence is no longer a future capability — it is already embedded in hiring decisions, customer service workflows, financial risk scoring, and operational processes across organizations of every size. Yet the governance of those systems has not kept pace. Research consistently reveals a stark asymmetry: widespread AI adoption accompanied by largely absent or immature governance frameworks. This is the problem Aslan AI was built to solve.

The Scale of the Governance Crisis

The numbers are sobering. According to one analysis, 80% of organizations now use AI in operations, yet only 14% have enterprise-level AI governance frameworks in place (Epicenter, 2026). A separate review found that nearly two-thirds of organizations adopted generative AI without establishing proper governance controls. Perhaps most striking, 95% of companies worldwide had not yet implemented comprehensive AI governance frameworks as of 2024, leaving them exposed to regulatory, ethical, and operational risk (AI Law Review, 2024).

The governance gap is not merely a compliance oversight. Mäntymäki et al. (2022) define organizational AI governance as "rules, practices, processes, and tools that organizations implement to govern their AI systems" in alignment with their strategies, objectives, and values. When that governance is absent, organizations lose the ability to ensure their AI operates within legal, ethical, and operational boundaries, a failure that scholars describe as a breakdown of accountability at the organizational level (Mäntymäki et al., 2022).

The Manual Governance Trap

Even organizations that recognize the governance problem often find themselves trapped in manual, fragmented processes. In their systematic review of the AI governance literature, published in Internet Research, Birkstedt et al. (2023) identify a persistent gap between formulating governance policy and actually implementing it: operational constraints, insufficient tooling, and limited resources lead organizations to defer governance documentation requirements or to execute them incompletely.

This operational burden is not just inefficient; it is dangerous. When governance teams are overwhelmed, reviews become inconsistent, documentation gets skipped, and high-risk edge cases receive insufficient scrutiny. Batool et al. (2025), in a systematic literature review published in AI and Ethics (Springer), identify insufficient incentives, resource constraints, and poor workflow integration as primary barriers to effective AI governance documentation, noting that practitioners often leave critical governance questions unanswered rather than invest time in proper documentation.

Why Small and Mid-Sized Organizations Face Disproportionate Risk

The governance burden falls hardest on organizations without large, dedicated compliance teams. The NIST AI Risk Management Framework explicitly acknowledges that "small to medium-sized organizations managing AI risks or implementing the AI RMF may face different challenges than large" enterprises (NIST, 2023). Resource constraints, limited access to specialized AI governance expertise, and the complexity of aligning several frameworks at once (the NIST AI RMF, ISO/IEC 42001, and the EU AI Act) create steep barriers for any organization that cannot staff a dedicated AI governance function.

Research on AI governance implementation confirms this structural disadvantage. Laato et al. (2024), writing in Humanities and Social Sciences Communications (Nature Publishing Group), found that organizations at early stages of AI governance maturity manage risk reactively rather than proactively, with governance activities triggered by incidents rather than by systematic oversight. This is precisely the "Foundational" maturity profile seen in many organizations beginning to deploy AI at scale. Meanwhile, the EU AI Act requires impact assessments, bias testing, and active monitoring for high-risk AI systems (European Parliament, 2024), a compliance burden that falls disproportionately on smaller organizations, which must build governance infrastructure from scratch rather than adapt existing enterprise systems.

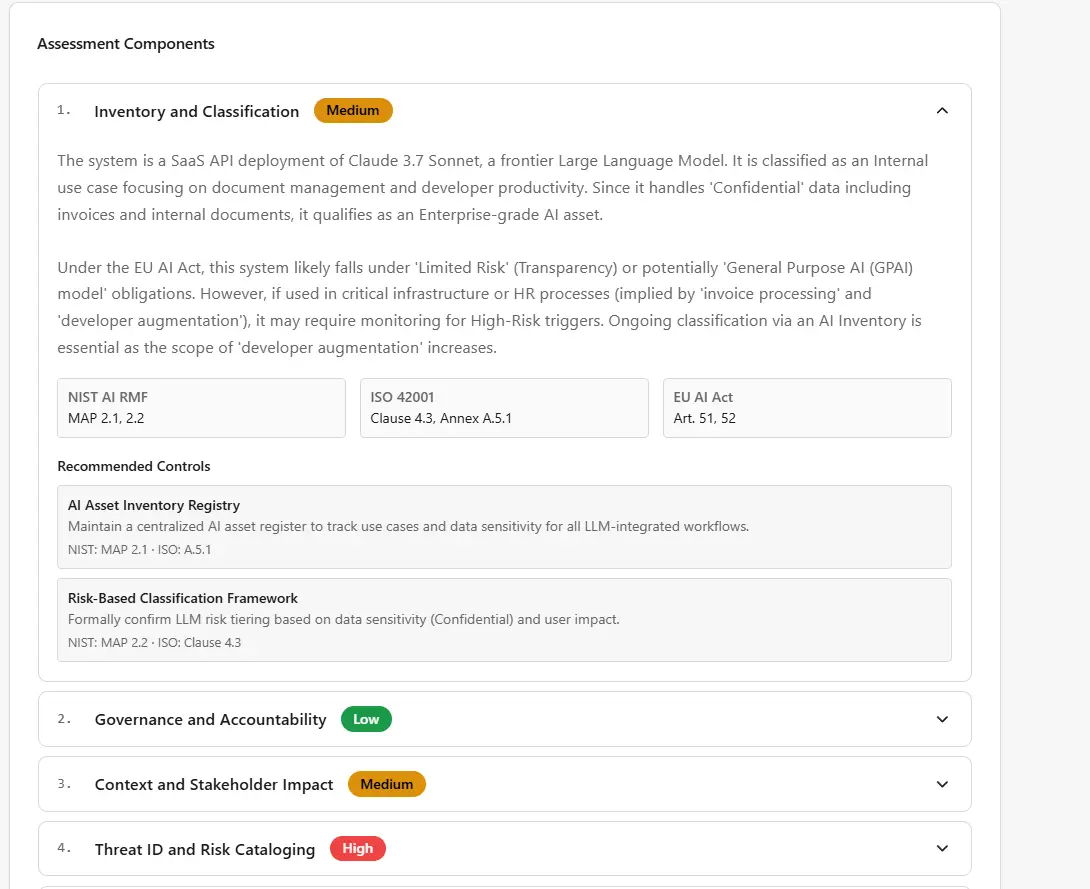

The real-world consequences of these gaps are visible in practice. A representative organizational AI audit, of the kind Rhindon Cyber's Aslan AI generates in minutes, reveals the typical pattern: critical-risk AI systems operating without completed impact assessments; high-risk systems missing the bias assessments called for by EU AI Act Article 10 and the NIST AI RMF MAP function; production AI systems running without active monitoring; and entire control frameworks that have never been tested. Organizations at a "Foundational" maturity level operate in a reactive posture, discovering problems through incidents rather than through structured oversight.

What Rhindon Cyber's Aslan AI Delivers

Aslan AI is the embedded intelligence layer inside Rhindon Cyber's AI Risk & Integrity Cloud (RAIC), designed to collapse the gap between AI deployment speed and governance readiness. Where manual governance processes consume weeks of analyst time, Aslan AI generates the governance documents a program depends on in seconds.

The capability set addresses every major governance documentation bottleneck:

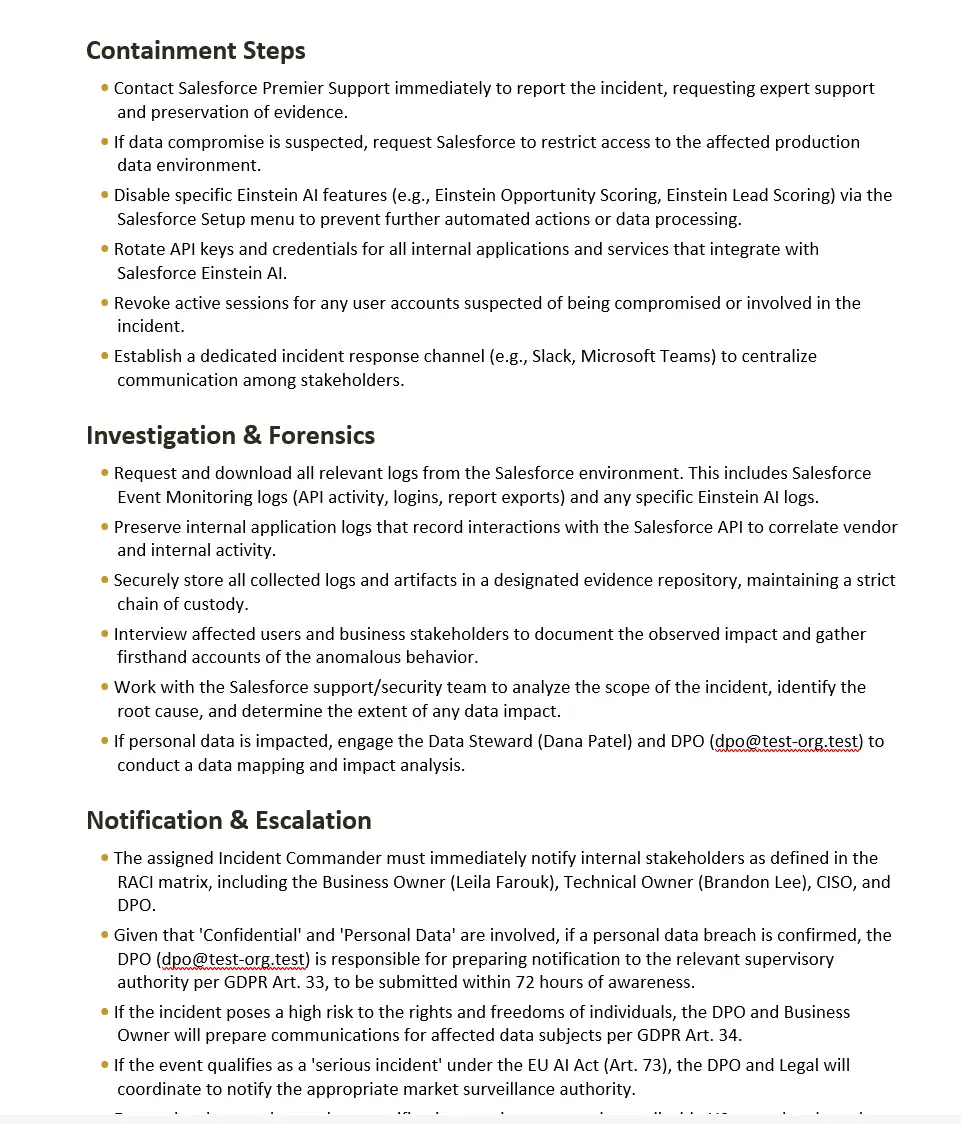

Every output is reviewable, editable, exportable as a PDF or Word document, and fully audited end to end. The platform is tier-gated and tenant-isolated, with 75 security controls across 13 security domains, and Aslan AI is available within the Enterprise tier of RAIC. Governance documentation that once required weeks of analyst time becomes a same-day deliverable grounded in the organization's own live data, the kind of automation-driven approach that can help close the speed asymmetry between AI deployment and governance review (Veale et al., 2018).

As an example, below is a snippet from an Aslan AI incident response plan created for Salesforce Einstein AI:

The Stakes of Inaction

The regulatory environment is tightening. Thomson Reuters (2026) found that AI disclosure among S&P 500 companies jumped from 12% in 2023 to 72% in 2025, as investors began treating AI governance as a material factor. Yet only 38% of U.S. companies have published AI policies, even though the United States is the world's largest AI adopter, a disparity that signals emerging competitive and regulatory risk. Scholars warn that without structured, embedded governance, organizations risk "inconsistencies, unforeseen risks, and a fundamental breakdown in trust, both internally and externally" (Mäntymäki et al., 2022).

The NIST AI RMF's GOVERN function makes the organizational stakes explicit: accountability mechanisms, roles, and incentive structures must be actively maintained, not assumed (NIST, 2023). ISO/IEC 42001 requires documented AI system impact assessments (clauses 6.1.4 and 8.4). EU AI Act Articles 9 and 10 mandate a risk management system and data governance practices for high-risk systems. Across every major framework, the compliance clock is already running.

Get Started

Aslan AI is purpose-built for the organization that is deploying AI but hasn't yet built the governance infrastructure to match.

See it in action at https://raic.rhindoncyber.com/demo

References

Batool, A., Zowghi, D., & Bano, M. (2025). AI governance: A systematic literature review. AI and Ethics. Springer. https://link.springer.com/article/10.1007/s43681-025-00716-0

Birkstedt, T., Minkkinen, M., Tandon, A., & Mäntymäki, M. (2023). AI governance: Themes, knowledge gaps and future agendas. Internet Research, 33(7), 133–167. https://doi.org/10.1108/INTR-01-2022-0042

Epicenter. (2026). AI governance framework: Why 89% of enterprises lack it. https://epicenter.tech/ai-governance-framework-gap-why-enterprises-lack-it/

European Parliament. (2024). Regulation (EU) 2024/1689 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act). Official Journal of the European Union. https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:32024R1689

Laato, S., Tiainen, M., Najmul Islam, A. K. M., & Mäntymäki, M. (2024). AI governance in a complex and rapidly changing regulatory environment. Humanities and Social Sciences Communications, 11, 1151. https://doi.org/10.1057/s41599-024-03560-x

Mäntymäki, M., Minkkinen, M., Birkstedt, T., & Viljanen, M. (2022). Responsible artificial intelligence governance: A review and research framework. The Journal of Strategic Information Systems, 34(2), 101885. https://doi.org/10.1016/j.jsis.2024.101885

National Institute of Standards and Technology. (2023). Artificial intelligence risk management framework (AI RMF 1.0) (NIST AI 100-1). U.S. Department of Commerce. https://doi.org/10.6028/NIST.AI.100-1

Thomson Reuters. (2026, February). New data reveals AI governance gap between policy and practice. https://www.thomsonreuters.com/en-us/posts/sustainability/ai-governance-gap-esg-risks/

Veale, M., Van Kleek, M., & Binns, R. (2018). Fairness and accountability design needs for algorithmic support in high-stakes public sector decision-making. In Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems (pp. 1–14). ACM. https://doi.org/10.1145/3173574.3174014